Small-model training and generation using Encodec. Plays in real time in Python on a CPU with interactive parametric control (real-time support for the web coming soon).

Pouring and "unpouring" water in a metal cup

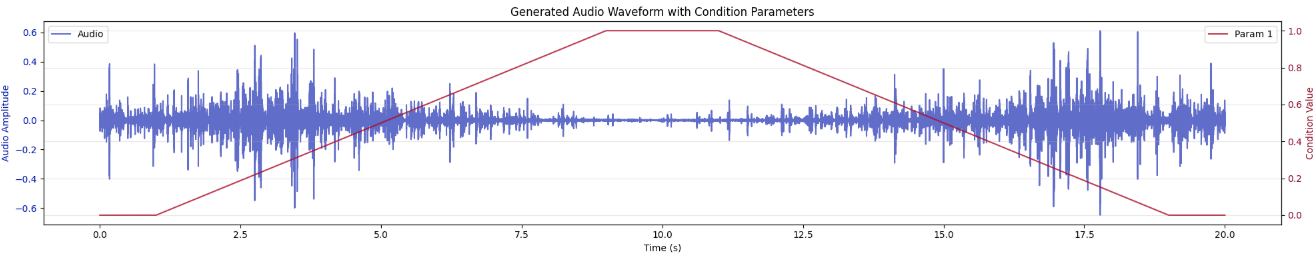

Synthesis driven by parameters only

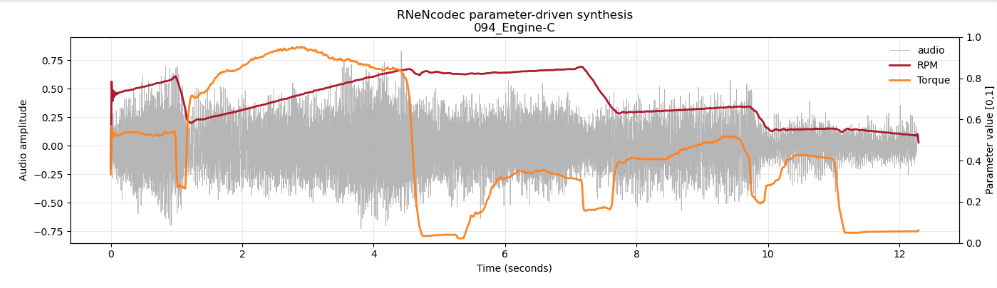

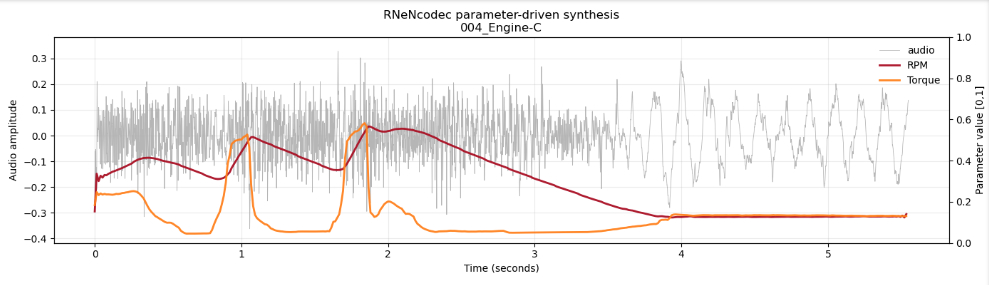

Driving engine sounds from RPM and torque

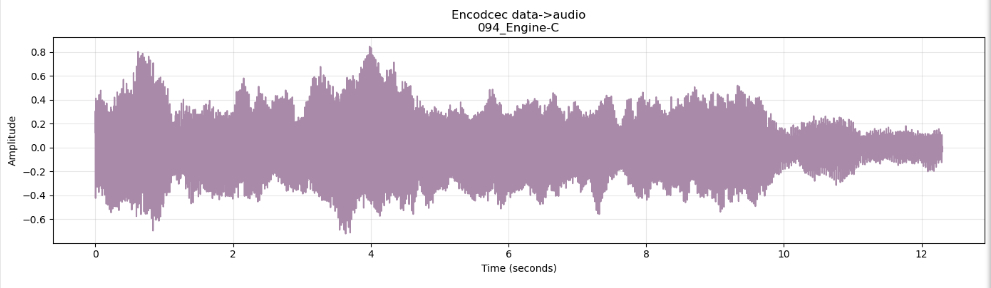

Original

Resynthesis driven by parameters only

Driving engine sounds from RPM and torque

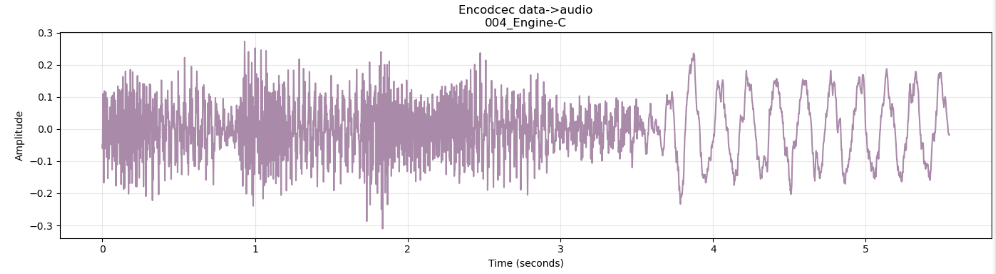

Original

Resynthesis driven by parameters only

And some real-time interaction

(The RNeNcodec model is running on an Intel i7-14700K CPU.) Sound classes are selected by one-hot parameters and controlled by a real-valued parameter.
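As a rough illustration of this kind of conditioning, the sketch below builds one vector from a one-hot class selector followed by a single real-valued control. The function name `make_conditioning`, the vector layout, and the clamping of the control to [0, 1] are assumptions made for this example. They are not taken from the RNeNcodec code.

```python
from typing import List


def make_conditioning(class_index: int, num_classes: int, param: float) -> List[float]:
    """Build a conditioning vector: one-hot class selector plus one real control.

    Hypothetical helper for illustration only. The layout
    [one-hot class..., param] and the [0, 1] range are assumptions.
    """
    if not 0 <= class_index < num_classes:
        raise ValueError(f"class_index must be in [0, {num_classes - 1}]")

    # One-hot selector: exactly one entry is 1.0, all others are 0.0.
    one_hot = [0.0] * num_classes
    one_hot[class_index] = 1.0

    # Clamp the real-valued control to the assumed [0, 1] range.
    clamped = min(1.0, max(0.0, param))

    return one_hot + [clamped]


# Select the second of three sound classes with the control at its midpoint.
vector = make_conditioning(class_index=1, num_classes=3, param=0.5)
print(vector)  # [0.0, 1.0, 0.0, 0.5]
```

In an interactive setting, a vector like this would typically be rebuilt whenever a slider or class selection changes and then passed to the model at each generation step.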